Image Intake

A proposal for a sidecar system that receives eligible radiology studies, runs available AI diagnostic pipelines, presents suspicious findings to radiologists, and stores granular review data for audit, analytics, and future workflow optimizations. Official diagnosis and report submission remain in the hospital's existing workflow.

This site serves as a memo for technical product stakeholders. We present the system concept: what the radiologist sees, what the backend stores, where asynchronous work happens, what gets audited, and where decisions remain open.

Receive DICOM studies from hospital imaging systems. Keep raw clinical records immutable from our point of view.

Represent model outputs generically: classifications, confidence scores, bounding boxes, segmentation masks, and higher-level summaries.

Let radiologists accept, reject, modify, or ignore AI findings, or record a separate diagnosis of their own. We treat disagreement and correction as first-class data that we can use for future improvements.
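To make the generic finding shape and the review actions concrete, here is a minimal Python sketch. Every name in it (`Finding`, `ReviewAction`, `ReviewDecision`, `effective_payload`, and all field names) is hypothetical and chosen only for illustration; the memo does not fix a schema. The sketch assumes a review decision is stored alongside the AI finding rather than overwriting it, so disagreement stays visible as data.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Optional


class ReviewAction(Enum):
    """Radiologist responses to an AI finding, mirroring the verbs in the text."""
    ACCEPT = "accept"
    REJECT = "reject"
    MODIFY = "modify"
    IGNORE = "ignore"
    SEPARATE_DIAGNOSIS = "separate_diagnosis"


@dataclass(frozen=True)
class Finding:
    """One model output in a generic envelope (hypothetical schema)."""
    finding_id: str
    study_uid: str
    kind: str  # e.g. "classification", "bounding_box", "segmentation_mask", "summary"
    payload: dict[str, Any]  # kind-specific content, e.g. label, box, mask reference
    confidence: Optional[float] = None


@dataclass(frozen=True)
class ReviewDecision:
    """A radiologist's response; the original finding is never overwritten."""
    finding_id: Optional[str]  # None for a separate diagnosis with no AI finding
    action: ReviewAction
    reviewer_id: str
    correction: Optional[dict[str, Any]] = None  # populated for MODIFY


def effective_payload(
    finding: Finding, decision: ReviewDecision
) -> Optional[dict[str, Any]]:
    """Return the content the radiologist endorsed, or None if nothing was endorsed."""
    if decision.action is ReviewAction.ACCEPT:
        return finding.payload
    if decision.action is ReviewAction.MODIFY:
        # Corrected fields override the model's values; untouched fields carry over.
        return {**finding.payload, **(decision.correction or {})}
    return None
```

Keeping `Finding` and `ReviewDecision` as separate immutable records means a rejected or modified finding can still be compared with the model's original output later, which is what monitoring and training-data curation would need.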

| Principle | Design implication |

|---|---|

| Raw medical records are external source-of-truth data | We do not mutate raw DICOM or hospital EHR records. |

| Radiologist sign-off is the clinical source of truth | AI can suggest, summarize, draft, and prioritize. Final diagnosis and official report submission remain in the radiologist's existing workflow. |

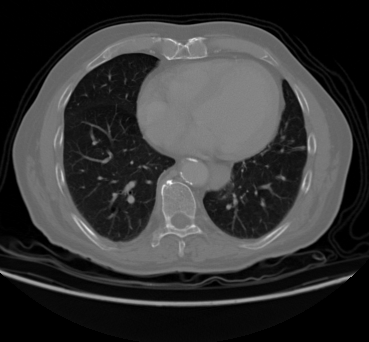

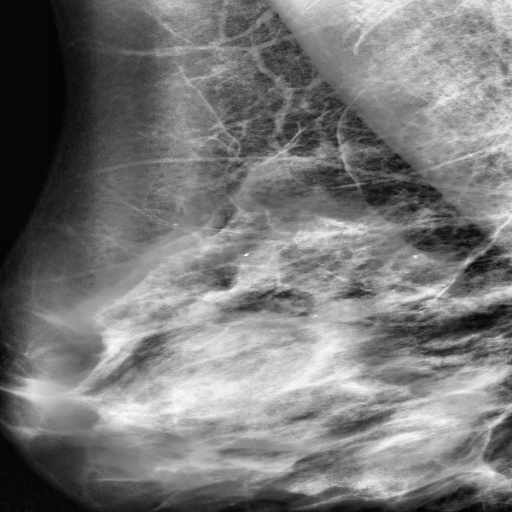

| AI review activates only where supported | The sidecar appears only for supported modality/model coverage, initially chest CT, mammography, and brain MRI examples. |

| Radiologist interactions are product data | Corrections to AI findings become analytics inputs for model monitoring, UX evaluation, QA sampling, and future training data curation. |

| Everything meaningful is evented | Study transitions, AI runs, annotation changes, sidecar draft edits, handoff/status updates, and access events are appended as structured records. |
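The "everything meaningful is evented" principle can be pictured as an append-only log of structured records. The Python sketch below is an in-memory stand-in, not a storage design: `Event`, `EventLog`, the event type strings, and the field names are all assumptions made for illustration. The only property it tries to show is that records are added and read, never updated or deleted.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class Event:
    """One immutable, structured record (hypothetical shape)."""
    event_type: str  # e.g. "study.transition", "ai.run", "annotation.changed", "access"
    study_uid: str
    actor: str  # user ID or service name
    data: dict[str, Any]
    occurred_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class EventLog:
    """In-memory stand-in for an append-only store: no update or delete methods."""

    def __init__(self) -> None:
        self._events: list[Event] = []

    def append(self, event: Event) -> int:
        """Append an event and return its zero-based sequence number."""
        self._events.append(event)
        return len(self._events) - 1

    def for_study(self, study_uid: str) -> list[Event]:
        """Return all events for one study in the order they were appended."""
        return [e for e in self._events if e.study_uid == study_uid]

    def to_jsonl(self) -> str:
        """Serialize the log as JSON Lines, one event per line."""
        return "\n".join(
            json.dumps(asdict(e), sort_keys=True) for e in self._events
        )
```

Replaying `for_study` in order would reconstruct a study's audit trail; a real deployment would replace the list with durable storage and add access controls, both of which are outside this sketch.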

Note: The sample data are PNG/JPG derivatives from Kaggle datasets, not production DICOM studies.